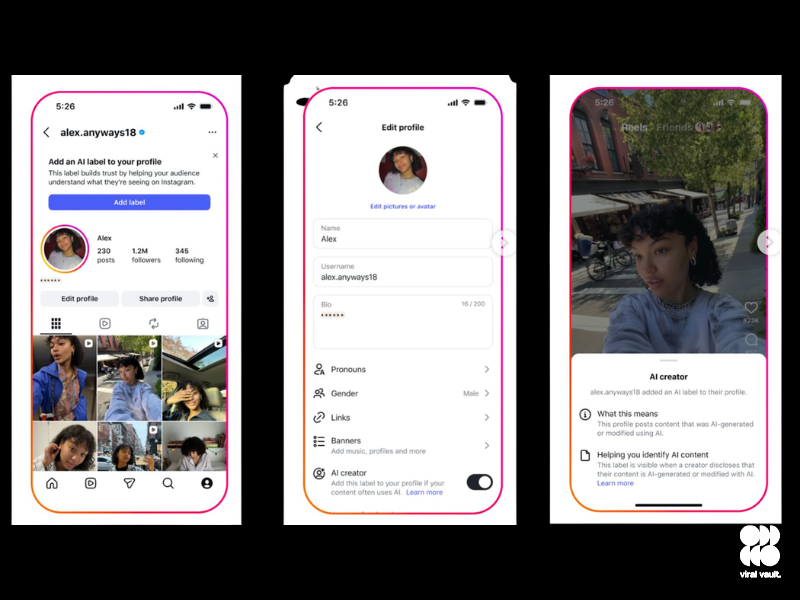

Instagram is testing a new optional label that would allow users to identify themselves as “AI creators,” signalling when their content is generated or significantly modified using artificial intelligence. The label is designed to appear across profiles, posts, and Reels – offering viewers more direct context than the platform’s existing “AI info” tags, which flag only that a piece of content may have been AI-assisted, without indicating whether AI is the creator’s primary method of working.

The distinction matters. Where the current tagging system operates at the content level – applied post by post – the new label functions more like a creator identity marker, giving audiences a broader signal about the nature of an account before they even engage with individual posts.

But the feature’s most significant limitation is baked into its design: it is entirely voluntary. Creators are under no obligation to adopt it, which means a substantial share of AI-generated content will likely continue circulating without any disclosure. Meta has acknowledged ongoing difficulty in reliably detecting AI content at scale across its platforms – a problem that an opt-in label does little to structurally address.

The move is framed as part of Meta’s wider transparency push, with the company actively encouraging creators who lean heavily on AI tools to self-identify. That framing, however, places the burden of honesty on the very people who may have the least incentive to carry it.

As AI-generated content grows harder to distinguish from human-made work, Instagram will almost certainly need to revisit whether voluntary disclosure is enough – or whether a more systematic approach is overdue.